Google has launched an AI experiment for you to goof around with. Move Mirror, as Google calls it, tracks your movements while you dance in front of your webcam and matches your poses with thousands of pictures from the web. Google says it uses over 80,000 images of people doing various activities, such as cooking and skiing, and places them next to the real-time video of the person striking the poses.

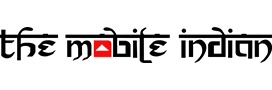

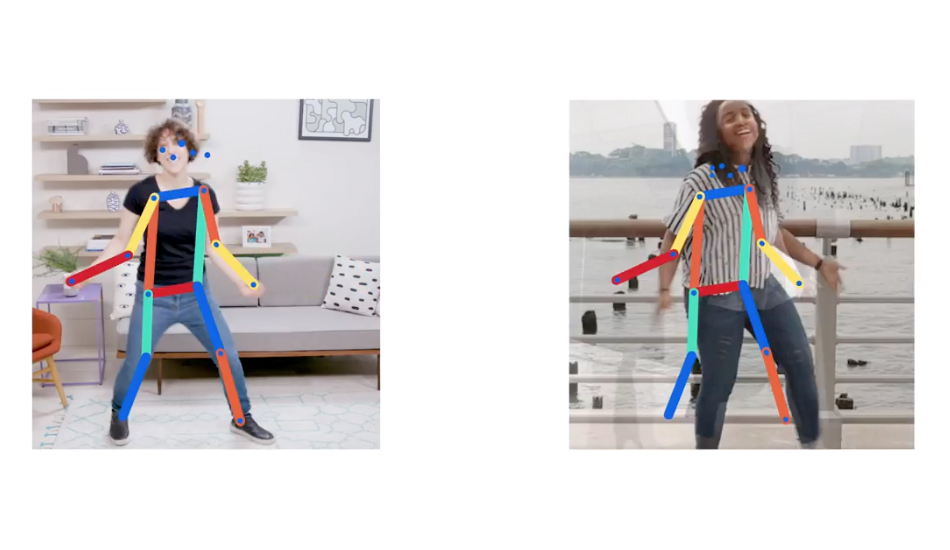

All you need to do is grant Move Mirror access to your webcam and start posing. Google matches you with images from its collection, and you can save the result as a GIF. The experiment employs a machine learning model called PoseNet, which detects the pose of a human subject by analysing the positions of the parts of the body and thus tracks their movement.

Once your pose is tracked, Move Mirror finds the closest match to each of your poses and lines these images up next to your own video in real time as you move, strung together like a flipbook. The result, you ask? A video of different people imitating you while you pose in front of your camera. You can also make a GIF and share it with your friends online.
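To make the "closest match" idea concrete, here is a minimal sketch of how a pose could be compared against a small image library. It is not Move Mirror's actual code: it assumes each pose is a list of (x, y) keypoints in a fixed order, normalises away position and scale, and picks the library entry with the highest cosine similarity. The function names, the toy three-keypoint poses and the library labels are all hypothetical; a real PoseNet pose has 17 keypoints.

```python
import math
from typing import Dict, List, Tuple

# A pose is a list of (x, y) keypoints, in the same order for every pose.
Pose = List[Tuple[float, float]]


def normalize(pose: Pose) -> List[float]:
    """Flatten a pose into a unit-length vector that ignores position and scale."""
    xs = [x for x, _ in pose]
    ys = [y for _, y in pose]
    min_x, min_y = min(xs), min(ys)
    # Scale by the larger side of the bounding box (fall back to 1.0 if degenerate).
    scale = max(max(xs) - min_x, max(ys) - min_y) or 1.0
    flat: List[float] = []
    for x, y in pose:
        flat.extend([(x - min_x) / scale, (y - min_y) / scale])
    length = math.sqrt(sum(v * v for v in flat)) or 1.0
    return [v / length for v in flat]


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Dot product of two unit-length vectors; 1.0 means identical direction."""
    return sum(p * q for p, q in zip(a, b))


def closest_pose(query: Pose, library: Dict[str, Pose]) -> str:
    """Return the label of the library pose most similar to the query (linear scan)."""
    q = normalize(query)
    return max(library, key=lambda name: cosine_similarity(q, normalize(library[name])))


# Hypothetical toy library: three keypoints per pose instead of PoseNet's 17.
library = {
    "arms_up": [(0.0, 0.0), (1.0, 2.0), (2.0, 0.0)],
    "arms_down": [(0.0, 2.0), (1.0, 0.0), (2.0, 2.0)],
}
# The same shape as "arms_up", but shifted and twice as large.
query = [(10.0, 10.0), (12.0, 14.0), (14.0, 10.0)]
print(closest_pose(query, library))  # prints "arms_up"
```

A linear scan like this is fine for a handful of images. With tens of thousands of images and a live video feed, a faster nearest-neighbour search structure would likely be needed.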

While the experiment was created for fun, Move Mirror shows how machine learning techniques can take the feed from a webcam or smartphone camera and detect your poses. Similar technology could be used in fields like teaching yoga or learning various types of dance. All of this is possible without privacy concerns: because the pose estimation runs in the browser, no third-party client will be able to extract data from your smartphone camera or webcam.

Irene Alvarado, Creative Technologist at Google Creative Lab, said, “With Move Mirror, we’re showing how computer vision techniques like pose estimation can be available to anyone with a computer and a webcam. We also wanted to make machine learning more accessible to coders and makers by bringing pose estimation into the browser—hopefully inspiring them to experiment with this technology.”