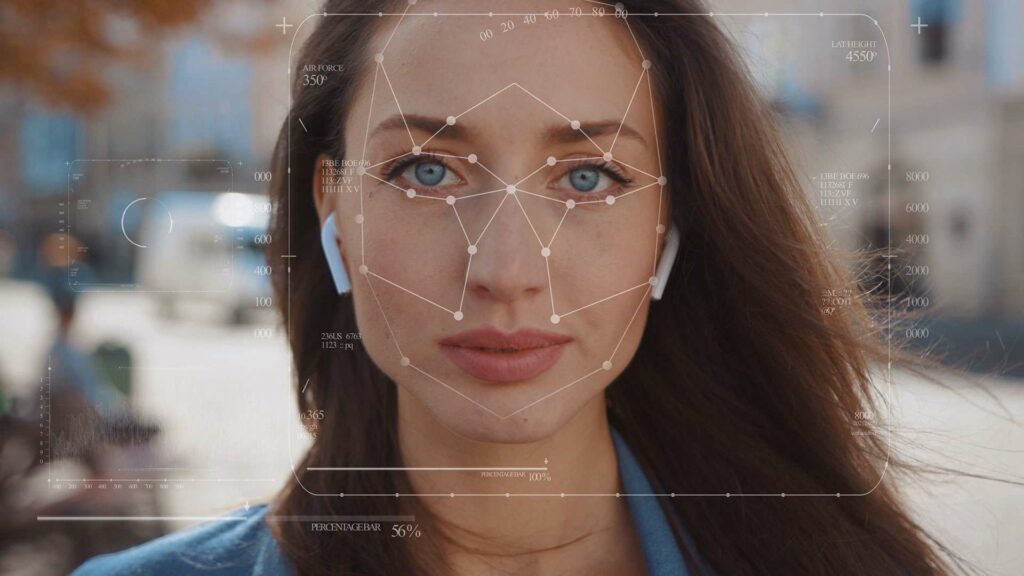

In recent years, deepfakes have spread widely across the internet. A deepfake is a piece of digital media, such as an image, video, or audio clip, that has been altered to show someone else's face or voice, producing something that isn't real. You might have already seen one without realizing it.

The spread of deepfakes has fueled misinformation and scams online. In response, Intel announced a new technology called "FakeCatcher" that it says can detect deepfake videos with 96% accuracy.

What is a deepfake?

Deepfake technology creates synthetic images, videos, and audio using machine learning and artificial intelligence algorithms. It has legitimate uses, such as producing realistic character animation for films.

However, it can also be misused, for example to fabricate videos of people saying or doing things they never said or did. As the results grow more realistic and sophisticated, it becomes harder to tell what is real and what is not, and viewers may come to believe things that are not true.

What are the dangers of deepfake technology?

Deepfake technology is still relatively young, but it is already potentially dangerous. Because it can produce realistic, convincing audio and video of people saying and doing things they never said or did, it can be used to spread false information and fake news. In skilled hands, deepfakes could also be used to fabricate evidence in court cases or to make someone appear to confess to a crime they didn't commit.

Ironically, the same qualities that make deepfakes dangerous also make them an impressive example of machine learning and artificial intelligence in action: they can produce terrifyingly accurate impersonations of celebrities and politicians doing or saying things they never did.

How does Intel’s FakeCatcher work?

According to Intel, its technology detects deepfakes in real time by looking for signs of human blood flow in the pixels of a video. This sets it apart from most other deepfake detectors, which examine the raw data for signs of manipulation; in other words, they try to identify what is wrong with a video rather than what is authentic about it.

As the heart pumps blood through the body, the colour of the skin and veins changes very slightly. Intel's tech picks up these subtle colour changes, and AI algorithms translate the resulting blood-flow signals into data that helps determine whether a video is legitimate.
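Intel has not published FakeCatcher's code, so the following is not its method. To illustrate the general idea described above, here is a minimal Python sketch of remote photoplethysmography (rPPG), a standard technique for reading a pulse from video: average the green channel over the face in each frame, then look for a single dominant frequency in a plausible heart-rate band. It assumes the face has already been detected and cropped. The function names (`mean_green`, `band_power`, `estimate_pulse`), the 0.7 to 4 Hz band, and the 0.05 Hz step are hypothetical choices for illustration only.

```python
import math
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (r, g, b)


def mean_green(face_pixels: Sequence[Pixel]) -> float:
    """Average green value over a (pre-cropped) face region in one frame.

    The green channel is commonly used in rPPG because it tends to carry
    the strongest pulse signal.
    """
    return sum(g for _, g, _ in face_pixels) / len(face_pixels)


def band_power(signal: Sequence[float], fps: float,
               low_hz: float = 0.7, high_hz: float = 4.0,
               step_hz: float = 0.05) -> List[Tuple[float, float]]:
    """Power of the mean-removed signal at each frequency in a plausible
    heart-rate band (0.7-4 Hz is roughly 42-240 beats per minute)."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]
    spectrum = []
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        f = low_hz + k * step_hz
        # Direct DFT evaluated at frequency f (Hz); fine for short clips.
        w = 2 * math.pi * f / fps
        re = sum(x * math.cos(w * i) for i, x in enumerate(centered))
        im = -sum(x * math.sin(w * i) for i, x in enumerate(centered))
        spectrum.append((f, re * re + im * im))
    return spectrum


def estimate_pulse(signal: Sequence[float], fps: float) -> Tuple[float, float]:
    """Return (beats per minute, share of band power in the peak bin).

    A live face tends to produce one dominant peak; a low share suggests no
    coherent pulse. Any cut-off applied to the share would be a hypothetical
    tuning parameter, not a value published by Intel.
    """
    spectrum = band_power(signal, fps)
    total = sum(p for _, p in spectrum)
    if total == 0:
        return 0.0, 0.0  # flat signal: no colour variation at all
    peak_f, peak_p = max(spectrum, key=lambda fp: fp[1])
    return peak_f * 60.0, peak_p / total


if __name__ == "__main__":
    # Synthetic trace: 10 seconds at 30 fps, mean green of 120 with a tiny
    # 1.2 Hz (72 bpm) pulse riding on top.
    fps = 30.0
    trace = [120 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
    bpm, share = estimate_pulse(trace, fps)
    print(round(bpm))  # 72
```

The direct DFT keeps the sketch dependency-free; a practical detector would more likely use an FFT library, compensate for head motion and lighting changes, and, as the description above suggests, feed signals like these into a trained model rather than rely on a single hand-set threshold.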

According to Intel, FakeCatcher has several potential use cases. Social media platforms could use it to stop users from uploading harmful deepfake videos. Global news organizations could use the detector to avoid inadvertently amplifying manipulated videos. And nonprofit organizations could use the platform to make deepfake detection available to everyone.


How can you spot a deepfake video?

There are a few key things to look for when trying to spot a deepfake video. First, watch the lips: deepfakes often show lip movements that don't quite match the audio, because facial mapping technology is not yet perfect. Second, look for unnatural or jerky movements. The artificial intelligence used to create deepfakes cannot yet perfectly mimic human motion, so small discrepancies often slip through.

Finally, listen for strange audio cues. Deepfakes can have odd background noise or choppy audio, because the technology cannot yet perfectly replicate human speech. If you notice any of these red flags, there's a good chance you're looking at a deepfake.

What can be done to stop deepfakes?

There are many ways to stop deepfakes, but the most effective is probably the simplest: not creating them in the first place. If you make deepfakes, consider the harm yours could do and refrain from creating it.

If you come across a deepfake, you can report it to the website or social media platform hosting it. Many platforms have policies against deceptive manipulated media and may remove it once it is reported.

You can also help spread awareness about deepfakes and their dangers. Many people are unaware of the dangers of deepfakes and may unwittingly share them. By educating yourself and others about deepfakes, you can help stop their spread.

Final Thoughts

Deepfake technology is certainly something to watch in the years ahead. While it can be used for good, as in movies and TV shows, it can also be misused to create fake news stories or false evidence against someone.

As this technology gets more sophisticated, it will become harder and harder to tell what’s real and what’s not. So, be careful out there and don’t believe everything you see on the internet!