Facebook wants to curb the repeated spread of misinformation on its platform, and has announced that it will take stricter action against people who repeatedly share false information.

The company relies on its fact-checking partners to rate content and identify Pages that share false or misleading information. Whether the content concerns COVID-19 and vaccines, climate change, elections or other topics, Facebook wants to make sure fewer people see misinformation on its apps.

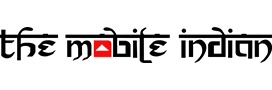

Facebook will now show a pop-up that gives people more information before they like a Page that has repeatedly shared content rated by fact-checkers.

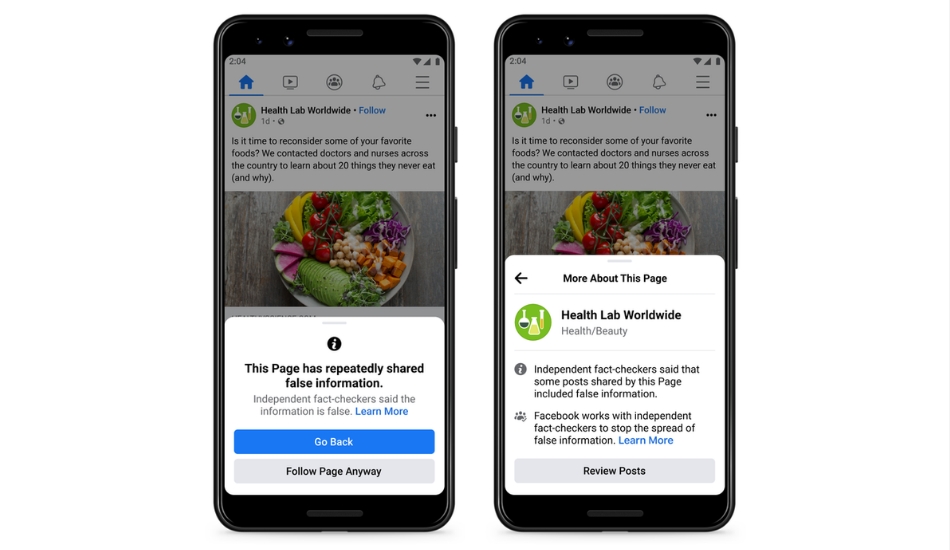

Facebook has also started reducing the News Feed distribution of all posts from an individual's Facebook account if that person repeatedly shares content rated by one of its fact-checking partners.

Facebook has also redesigned the notifications people receive when they share fact-checked content. "The notification includes the fact-checker's article debunking the claim as well as a prompt to share the article with their followers. It also includes a notice that people who repeatedly share false information may have their posts moved lower in News Feed so other people are less likely to see them," says Facebook.