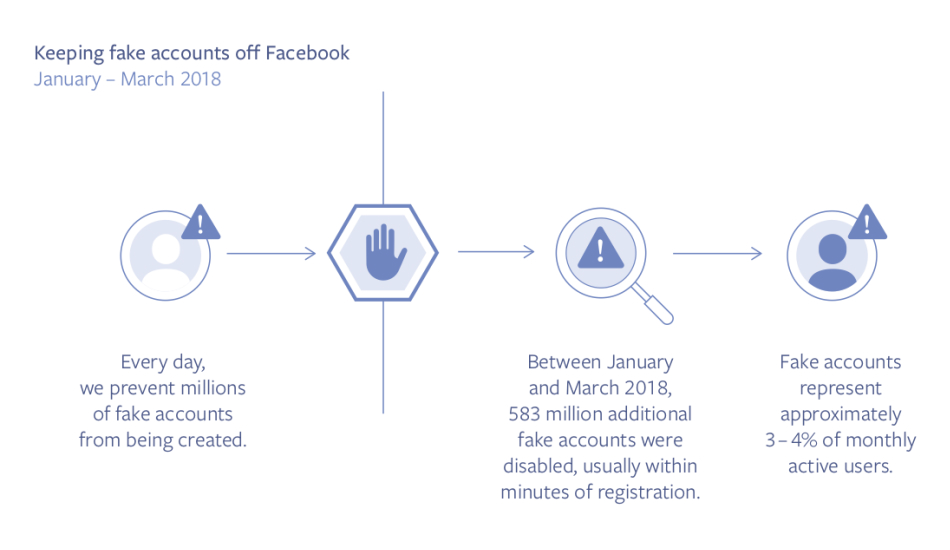

In its Community Standards Enforcement report for the first quarter of 2018, Facebook has revealed that it cracked down on spam, hate speech, violence and adult nudity, disabling 583 million fake accounts in the process. It also took action on 837 million spam posts published on the platform, along with 1.9 million posts that encouraged terrorism, 2.5 million posts containing hate speech and 21 million posts depicting adult nudity or sexual activity.

Facebook claims that it can now detect nearly all spam posts and that, with the help of artificial intelligence, it will be able to promptly remove posts that propagate terrorism, violence or sexual content on its website. The announcement comes after the social media giant suspended over 200 apps across its platform that allegedly used users' private data without their knowledge.

The report also noted that Facebook's systems flagged 85.6 percent of graphic violence posts before users reported them. It added that an estimated 22 to 27 of every 10,000 content views included graphic violence. Enhanced photo detection has also helped Facebook delete 1.9 million posts that spread terrorist propaganda without affecting users on a major scale.

Though this level of transparency from Facebook is widely welcomed, it also shows the sheer extent of the misinformation, fake accounts and abusive content the company is currently dealing with. While Facebook's detection technology has improved over time, it flagged only 38 percent of the hate speech spread across its platform. This means the social media company still relies on its users and reviewers to catch hate speech, and it will take some time for its AI to learn to recognize sarcasm and reliably detect abusive content.

The report, which went live yesterday, compares much of Facebook's action against spam between Q4 2017 and Q1 2018. Facebook has clarified that reports of this kind will be published every six months and will provide an overview of how the platform enforces its community standards.