Apple today announced new software features designed for people with mobility, vision, hearing, and cognitive disabilities. These features will roll out later this year through software updates. People with limb differences will be able to navigate Apple Watch using AssistiveTouch; iPad will support third-party eye-tracking hardware for easier control; and for blind and low vision communities, Apple’s VoiceOver screen reader will use on-device intelligence to explore objects within images.

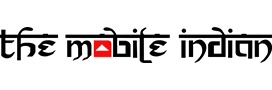

Apple is also launching a new service on Thursday, May 20, called SignTime. It enables customers to communicate with AppleCare and Retail Customer Care by using American Sign Language (ASL) in the US, British Sign Language (BSL) in the UK, or French Sign Language (LSF) in France, right in their web browsers. The service will initially launch in the US, UK, and France, and Apple plans to expand it to more countries in the near future.

AssistiveTouch for watchOS allows users with upper body limb differences to use Apple Watch without ever having to touch the display or controls. Apple is using built-in motion sensors like the gyroscope and accelerometer, along with the optical heart rate sensor and on-device machine learning, to detect subtle differences in muscle movement and tendon activity, which lets users navigate a cursor on the display through a series of hand gestures, like a pinch or a clench.

You can answer incoming calls, control an onscreen motion pointer, and access Notification Center, Control Center, and more with AssistiveTouch on Apple Watch.

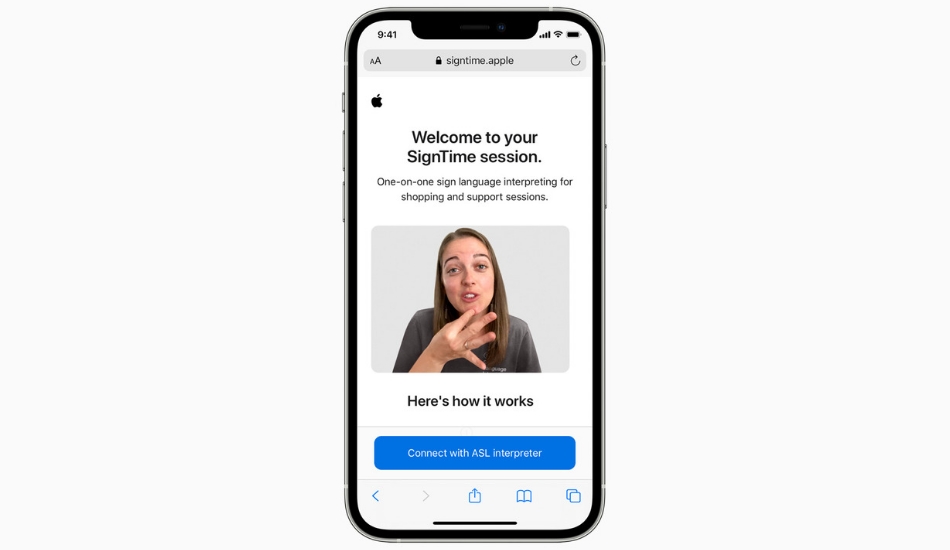

With VoiceOver, users can navigate a photo of a receipt like a table: by row and column, complete with table headers. VoiceOver can also describe a person’s position along with other objects within images, so people can relive memories in detail. With Markup, users can add their own image descriptions to personalize family photos.

iPadOS will support third-party eye-tracking devices, making it possible for people to control iPad using just their eyes. Later this year, compatible MFi devices will track where a person is looking onscreen and the pointer will move to follow the person’s gaze.

Apple is also adding support for new bi-directional hearing aids. The microphones in these new hearing aids enable those who are deaf or hard of hearing to have hands-free phone and FaceTime conversations. Apple says the next-generation models from MFi partners will be available later this year.

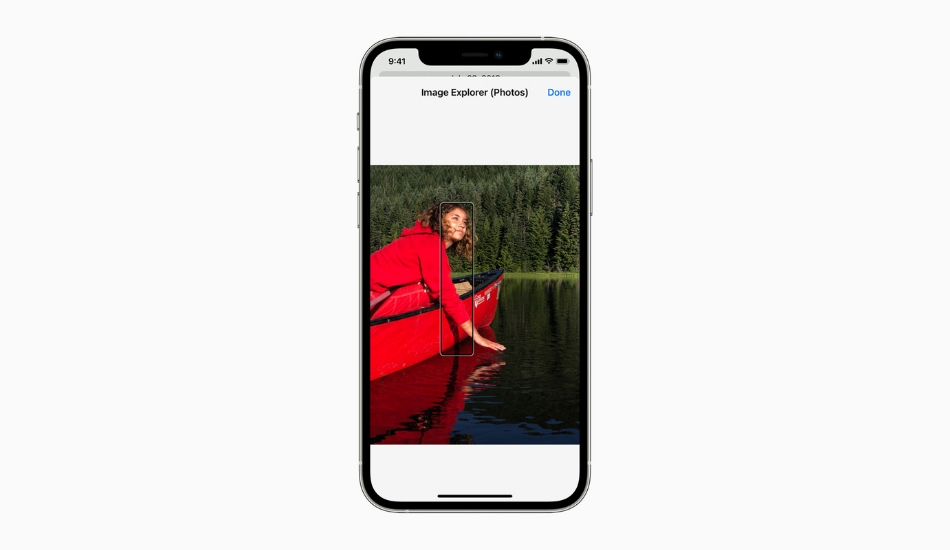

“Apple is also introducing new background sounds to help minimize distractions and help users focus, stay calm, or rest. Balanced, bright, or dark noise, as well as ocean, rain, or stream sounds continuously play in the background to mask unwanted environmental or external noise, and the sounds mix into or duck under other audio and system sounds,” says Apple.

Additional features coming later this year include new Memoji customizations that better represent users with oxygen tubes, cochlear implants, and a soft helmet for headwear. The display and text size settings will be customizable on a per-app basis. Sound Actions for Switch Control will replace physical buttons and switches with mouth sounds such as a click, pop, or “ee” sound for users who are non-speaking and have limited mobility.

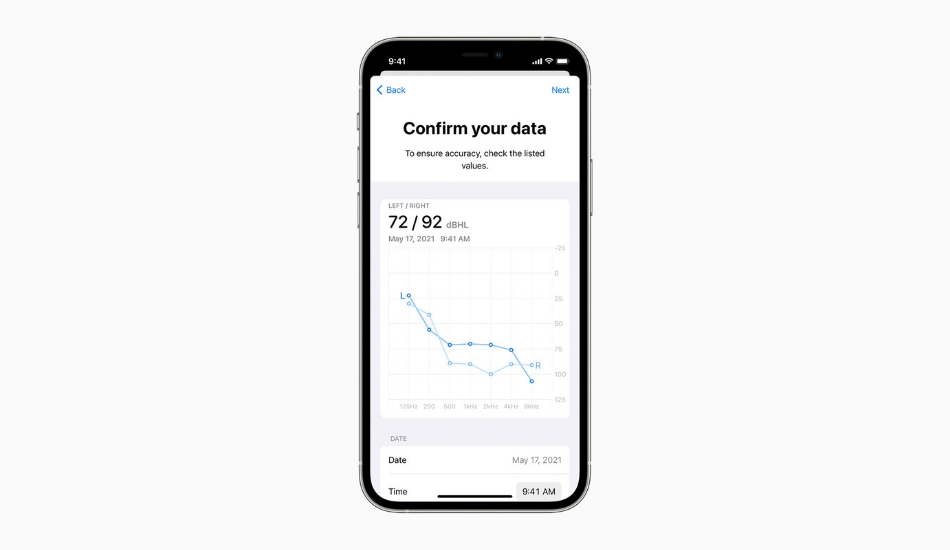

Apple is also bringing support for recognizing audiograms, the charts that show the results of a hearing test, to Headphone Accommodations. Headphone Accommodations amplifies soft sounds and adjusts certain frequencies to suit a user’s hearing.