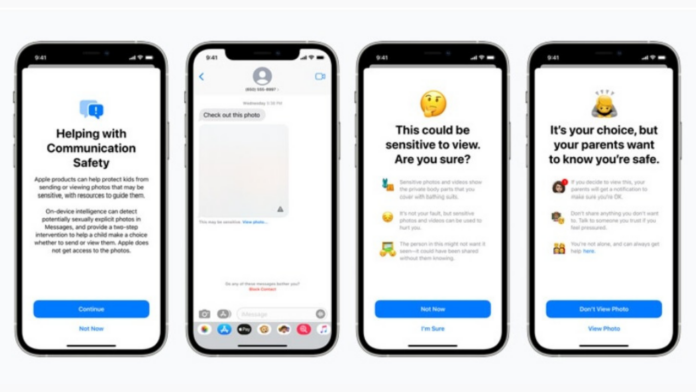

Apple has announced new child safety features in three areas, developed in collaboration with child safety experts. The features aim to limit the spread of Child Sexual Abuse Material (CSAM). In addition, new communication tools will let parents play a more informed role in helping their children navigate communication online.

First, Apple says that the new Messages app will use on-device machine learning to warn about sensitive content while keeping private communications unreadable by Apple. Second, iPhones and iPads will scan images for known CSAM; if matches are found, Apple will report them to the National Center for Missing and Exploited Children (NCMEC).

Siri and Search are also being updated to intervene when users search for CSAM-related queries. These interventions will explain to users that interest in this topic is harmful and problematic, and Apple will point them to resources from its partners for getting help. These updates to Siri and Search will arrive later this year in iOS 15, iPadOS 15, watchOS 8, and macOS Monterey.

How does CSAM scanning on iPhones work?

Instead of scanning images in the cloud, the system will perform on-device matching against a database of known CSAM image hashes provided by NCMEC and other child safety organizations. Apple will transform this database into an unreadable set of hashes that is stored securely on users' devices. Apple says the system was designed with user privacy in mind.

In practice, when a user uploads an image to iCloud, the iPhone will first create a hash of the image and compare it against the on-device database built from the hashes NCMEC provides, checking for known CSAM. Apple says this matching process is powered by a cryptographic technique called private set intersection, which determines whether there is a match without revealing the result.
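
Private set intersection is a general cryptographic technique, and the sketch below is not Apple's actual protocol. As a rough illustration only, it implements a textbook Diffie-Hellman-style private set intersection in Python: the server blinds its hash database with a secret key (one way a database can be stored on a device yet stay unreadable to it), the device blinds its own photo hashes, and only the server learns which photos match. It assumes a toy prime modulus that is far too weak for real use, and it uses SHA-256 as an exact-match stand-in for an image hash; a real image-matching system would need a hash that tolerates resizing and recompression. All function names and the secret keys `a` and `b` are hypothetical.

```python
import hashlib
import math
import secrets

# Toy modulus: the Mersenne prime 2**127 - 1. Far too weak for real use; a real
# deployment would use a vetted cryptographic group instead.
P = 2**127 - 1


def hash_to_group(data: bytes) -> int:
    """Map bytes to an integer in [1, P - 1] via SHA-256.

    SHA-256 is an exact-match stand-in: changing a single byte of an image
    produces an unrelated value, unlike a perceptual image hash.
    """
    digest = int.from_bytes(hashlib.sha256(data).digest(), "big")
    return digest % (P - 1) + 1


def random_secret() -> int:
    """Pick a secret exponent coprime to P - 1, so blinding is one-to-one."""
    while True:
        k = secrets.randbelow(P - 2) + 1
        if math.gcd(k, P - 1) == 1:
            return k


def blind(values, key):
    """Raise each value to the secret key modulo P."""
    return [pow(v, key, P) for v in values]


def server_prepare(known_items, a):
    """Server blinds its database; the device stores only these values."""
    return blind([hash_to_group(x) for x in known_items], a)


def device_respond(blinded_db, photos, b):
    """Device blinds its photo hashes and re-blinds the stored database.

    Nothing here tells the device whether any photo matches.
    """
    photo_tokens = blind([hash_to_group(p) for p in photos], b)
    double_blinded_db = set(blind(blinded_db, b))
    return photo_tokens, double_blinded_db


def server_match(photo_tokens, double_blinded_db, a):
    """Server applies its key to each photo token and returns matching indices.

    (h**b)**a == (s**a)**b mod P exactly when h == s, because both keys are
    coprime to P - 1 and the exponent map is therefore a bijection.
    """
    return [i for i, t in enumerate(photo_tokens)
            if pow(t, a, P) in double_blinded_db]


if __name__ == "__main__":
    a, b = random_secret(), random_secret()  # hypothetical server / device keys
    known = [b"known-image-1", b"known-image-2"]
    photos = [b"holiday photo", b"known-image-2", b"cat photo"]
    db = server_prepare(known, a)
    tokens, dbl = device_respond(db, photos, b)
    print(server_match(tokens, dbl, a))  # prints [1]
```

Because the two blinding steps commute, a photo's doubly blinded hash equals a doubly blinded database entry only when the underlying hashes are identical, so the server can spot matches without seeing unblinded hashes of non-matching photos, and the device never receives the comparison result.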

If an account is flagged, Apple reviews it manually before reporting it. If the review confirms the match, Apple disables the user's account and sends a report to NCMEC. Users who believe their account was flagged by mistake can file an appeal to have it reinstated.

Concerns raised about CSAM detection on iPhones

A recent report from the Financial Times highlights concerns raised by several researchers about Apple's new CSAM detection. They warn that the system could be misused for surveillance, putting the data of millions of people at risk. Apple has “sent a very clear signal. In their (very influential) opinion, it is safe to build systems that scan users’ phones for prohibited content,” warned Matthew Green, a security researcher at Johns Hopkins University.

The researchers support Apple's goal of limiting the spread of CSAM, but they worry that governments around the world could misuse the tool to access citizens' data. Ross Anderson, Professor of Security Engineering at the University of Cambridge, said, “It is an absolutely appalling idea because it is going to lead to distributed bulk surveillance of… our phones and laptops.”

Although Apple's system currently targets known CSAM, critics argue that it could be configured to scan for other types of images, such as anti-government signs. That would give a powerful tool to any government seeking more control over its citizens.